Latest Version 2.0.5

March 1, 2022

This app is archived.

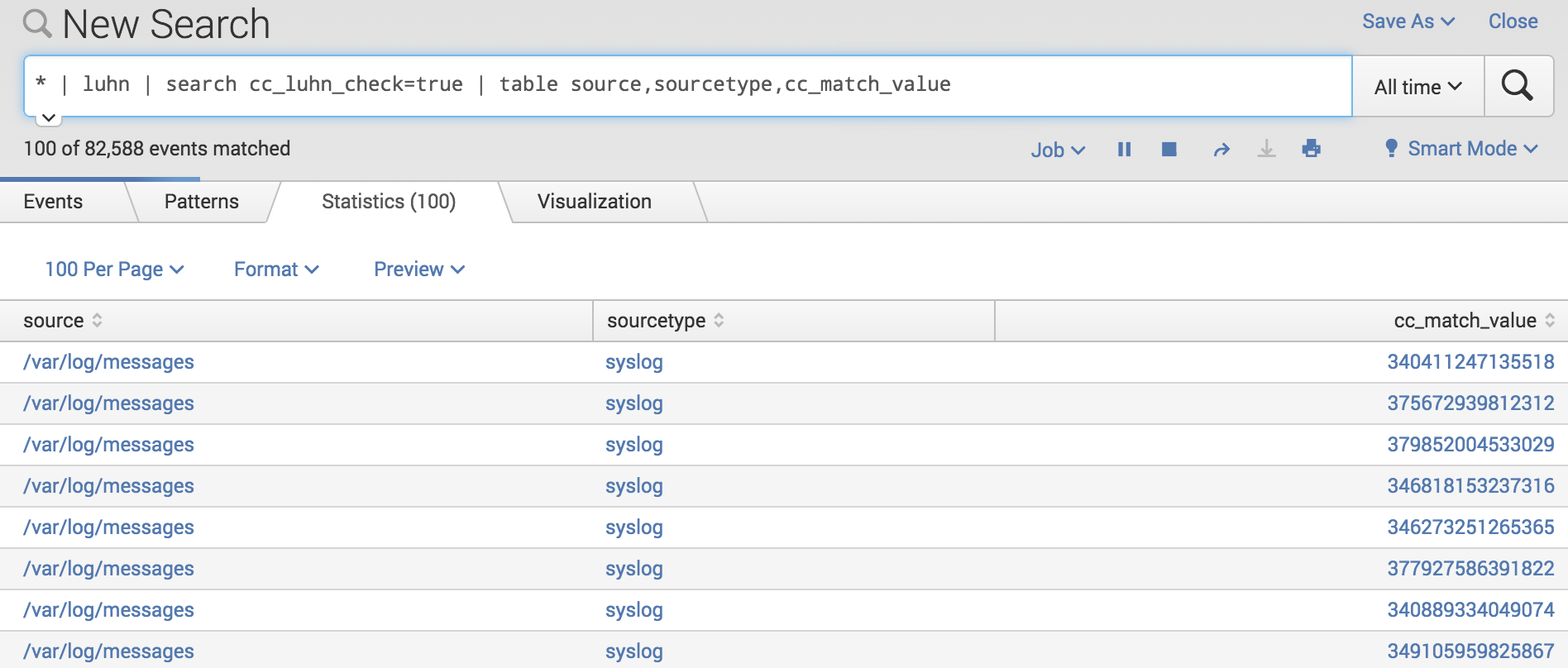

This add-on adds a native search capability for finding possible credit card numbers in your logs. It provides a new search command called "luhn", which validates candidate numbers with a Luhn algorithm check.
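For readers unfamiliar with the Luhn check, the idea can be sketched in a few lines of Python. This is an illustrative sketch only, not the add-on's actual code: the `CANDIDATE` pattern (13 to 19 digits, optionally separated by single spaces or hyphens) and the function names `luhn_valid` and `find_card_candidates` are assumptions made for this example.

```python
import re
from typing import List

# Assumed candidate pattern: 13 to 19 digits, optionally separated by
# single spaces or hyphens. The add-on's real matching rules may differ.
CANDIDATE = re.compile(r"(?<!\d)\d(?:[ -]?\d){12,18}(?!\d)")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    # Walk from the rightmost digit; double every second digit.
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9  # same as summing the two digits of the product
        total += d
    return total % 10 == 0


def find_card_candidates(text: str) -> List[str]:
    """Return digit strings in text that match CANDIDATE and pass Luhn."""
    hits = []
    for match in CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append(digits)
    return hits
```

A passing checksum only means a number is plausibly a card number. Unrelated digit strings pass the check by chance roughly one time in ten, so matches are best treated as leads to review rather than confirmed findings.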

Licensing

GNU GPL 3.0